Thanks to Mellanox Technologies, our tool that designs fat-tree and torus networks now works with real-life prices for InfiniBand hardware. Mellanox kindly provided list prices for the previous generation of switches, InfiniBand QDR; these figures are unlikely to change. The three switches are: Grid Director™ 4036 (36 ports), IS5100 Chassis Switch (18..108 ports) and IS5200 Chassis Switch (18..216 ports).

Remember two things: (a) these prices are for QDR InfiniBand hardware; for the most recent prices and for current InfiniBand FDR hardware, please contact Mellanox; (b) you can always download the tool and supply it with your own prices.

The main advantage of our fat-tree design tool is that it tries all possible configurations of modular (chassis) switches, including those where only some line cards are installed, and therefore recommends the most cost-efficient designs. Additionally, you can tell the tool whether your network must be expandable in the future, and up to how many hosts.
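To make the idea of "trying all configurations" concrete, here is a minimal brute-force sketch in Python. It is not the tool's actual code. The prices are made-up placeholders (not Mellanox list prices), and it assumes 18-port line cards (consistent with the port ranges quoted for the IS5100 and IS5200 above), identical chassis, and the same number of cards in every chassis. `cheapest_core` and the `CHASSIS` table are hypothetical names. The optional `expand_to` argument mimics the expandability requirement: the chosen chassis must have enough empty slots to grow to that many ports later.

```python
# Brute-force search for the cheapest set of modular core switches.
# All prices are HYPOTHETICAL placeholders, not Mellanox list prices.

PORTS_PER_CARD = 18  # assumption: 18-port line cards

CHASSIS = {
    # model: empty chassis price, price per line card, number of card slots
    "IS5100": {"base_price": 20000.0, "card_price": 10000.0, "max_cards": 6},
    "IS5200": {"base_price": 35000.0, "card_price": 10000.0, "max_cards": 12},
}


def cheapest_core(needed_ports, expand_to=None, max_chassis=18):
    """Return (cost, model, number_of_chassis, cards_per_chassis) or None.

    needed_ports: ports that must be installed today.
    expand_to:    ports the chassis must be able to hold after adding cards later.
    """
    future_ports = max(needed_ports, expand_to or 0)
    best = None
    for model, spec in CHASSIS.items():
        for n in range(1, max_chassis + 1):
            # Empty slots must leave room for the planned expansion.
            if n * spec["max_cards"] * PORTS_PER_CARD < future_ports:
                continue
            # Try every partial population, from 1 card up to a full chassis.
            for cards in range(1, spec["max_cards"] + 1):
                if n * cards * PORTS_PER_CARD < needed_ports:
                    continue
                cost = n * (spec["base_price"] + cards * spec["card_price"])
                if best is None or cost < best[0]:
                    best = (cost, model, n, cards)
    return best


print(cheapest_core(90))
print(cheapest_core(108, expand_to=216))
```

With these placeholder prices, `cheapest_core(90)` picks a single IS5100 with only 5 of its 6 cards installed, while `cheapest_core(108, expand_to=216)` prefers a half-populated IS5200 because it can later grow to 216 ports.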

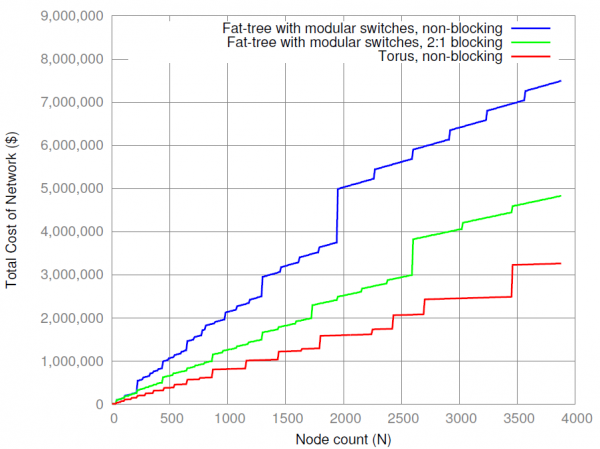

I decided to use the newly available prices to objectively compare the costs of the following networks: non-blocking fat-trees, 2:1 blocking fat-trees, and torus networks.

With the switches listed above in the tool's database, we can design non-blocking fat-tree networks with up to 36*216/2=3,888 nodes. (That is a very decent supercomputer by today's standards.) The graph, produced from the tool's output, shows that torus networks are the clear leaders in cost, with the drawback that they are inherently blocking. Using a blocking fat-tree also saves money compared to a non-blocking implementation. But remember that blocking networks are not suitable for all applications.
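The port-count arithmetic above generalizes to a small formula. Here is a sketch, assuming a two-level fat-tree in which every edge switch sends exactly one uplink to each core switch (so the core switch port count caps the number of edge switches). `max_hosts` is a hypothetical helper, and `blocking` is the down:up port ratio on each edge switch.

```python
def max_hosts(edge_ports, core_ports, blocking=1):
    """Largest two-level fat-tree built from one edge and one core switch size.

    blocking is the down:up ratio on edge switches (1 = non-blocking, 2 = 2:1).
    Assumes each edge switch has exactly one uplink to every core switch, so at
    most core_ports edge switches can be attached.
    """
    uplinks = edge_ports // (blocking + 1)  # ports per edge switch going up
    host_ports = edge_ports - uplinks       # ports per edge switch going down
    return core_ports * host_ports


print(max_hosts(36, 216))              # 3888: non-blocking, 216-port chassis core
print(max_hosts(36, 36))               # 648: non-blocking, 36-port core
print(max_hosts(36, 216, blocking=2))  # 5184: 2:1 blocking, 216-port chassis core
```

For the non-blocking case this reduces to edge_ports * core_ports / 2, matching the figures in the text. With `blocking=2` each 36-port edge switch has 24 host ports and 12 uplinks, so the same switches reach 216 * 24 = 5184 hosts under the stated assumption.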

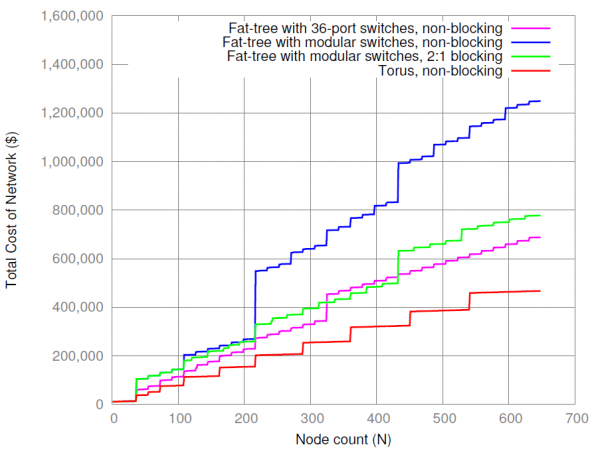

Fat-trees on the previous graph only used modular (chassis) switches on the core level: such configurations are easier to manage and expand. But modular switches are more expensive with regard to per-port cost. The alternative is to use simple 36-port switches on the core level. With the same 36-port switches on both the edge and core levels, we can only build networks with 36*36/2=648 hosts. Let’s see if we can save some money this way. The figure below is essentially a close-up of the previous graph, for the region of up to 648 nodes, and the additional magenta line shows the cost of networks built with 36-port switches on both levels:

As can be seen, starting from 216 nodes, this type of network is 40 to 45% cheaper than a non-blocking fat-tree with modular switches on the core level. However, as the network approaches the 648-node limit, its drawbacks begin to show, even though its cost stays lower: (a) such networks are not easily expandable; (b) they have more hardware items to manage; (c) cables between the edge and core layers do not run in bundles, which adds further complexity to installation and maintenance.
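The management and cabling drawbacks can be quantified with a little counting. The sketch below assumes a non-blocking two-level fat-tree with 36-port edge switches and uses a hypothetical design rule: choose the fewest core switches that provide enough ports and split each edge switch's 18 uplinks evenly among them. It treats a chassis as a single core switch and ignores prices and cable lengths; `two_level_layout` is a hypothetical name, not part of the tool.

```python
import math


def two_level_layout(hosts, core_ports, edge_ports=36):
    """Non-blocking two-level fat-tree layout.

    Returns (edge_switches, core_switches, cables_per_edge_core_pair).
    Hypothetical rule: use the fewest core switches that offer enough ports
    and divide each edge switch's uplinks evenly.
    """
    host_ports = edge_ports // 2
    uplinks = edge_ports - host_ports
    edges = math.ceil(hosts / host_ports)
    total_uplinks = edges * uplinks
    for cores in range(1, uplinks + 1):
        if uplinks % cores == 0 and cores * core_ports >= total_uplinks:
            return edges, cores, uplinks // cores
    raise ValueError("too many hosts for this core switch size")


for core_ports in (36, 216):
    edges, cores, bundle = two_level_layout(648, core_ports)
    print(f"{core_ports}-port core: {edges} edge + {cores} core switches, "
          f"{bundle} cable(s) per edge-core pair")
```

For 648 hosts this gives 36 edge switches plus 18 core switches, with a single cable between each edge-core pair, when the core is built from 36-port switches; with 216-port chassis it gives 36 edge switches plus 3 chassis, with bundles of 6 cables.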

We therefore recommend using modular switches on the core layer. This is the default configuration of our fat-tree design tool (though you can change it at any time for custom designs).

For even larger installations, you can use modular switches on the edge layer as well, as in the SuperMUC supercomputer. This further reduces the overall number of switches and the associated management complexity.

Are you generating through our cluster config? http://www.mellanox.com/clusterconfig/

Hi, Brian,

No, as the blog post states, I use my own tool. The link is in the very first line of the blog post. Also, you can download the tool and run it on your local computer. It can design torus networks as well.

I don’t know the algorithm inside Mellanox’s configurator, but its results appear pretty similar to those of my tool.

gotcha. nice!